Last night, Number Cruncher Politics completed the fieldwork for a nationally representative opinion poll. I’d like to explain the motivation for doing it, and some of the findings.

Since 2014, NCP has been publishing analysis of elections and other people’s polls, along with periodic collaborations with the media. At least, that’s the bit that readers will have seen. Most of our work goes on behind the scenes, as consultancy and in an advisory capacity to businesses, financial markets, governments, think tanks and others.

Original polling has become an increasingly requested service. While outsourcing is always an option, polling in house seemed at least worth considering, given the capabilities we've developed in that area and the technical assistance we have already provided to polling companies.

But besides popular demand, polling is an interesting and important science. Although the widespread perception that polls have become less accurate has been shown to be incorrect, that’s only because of continuous improvement to meet ever increasing challenges.

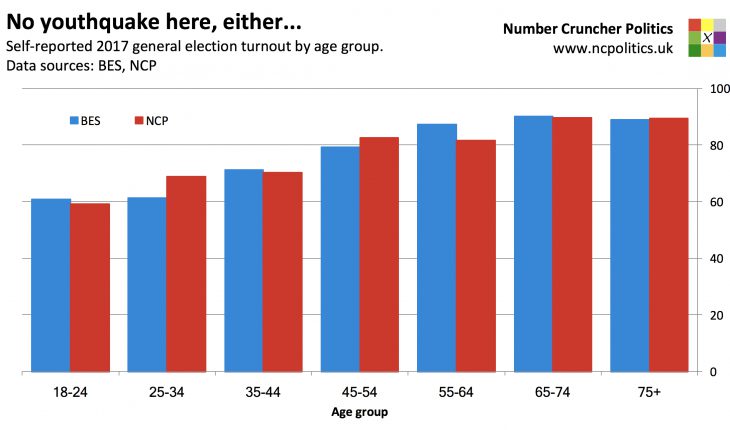

One of the biggest such challenges is achieving a representative sample, notably reaching people in low-turnout demographics who are representative of those demographics – not the ones who are atypically likely to vote – as well as reaching the older pensioners (particularly over 75s), and politically disengaged people across the board.

As the results of this experiment are likely to be of interest to readers and more widely, we will be releasing findings in the coming weeks, while conducting in-depth analysis of the data gathered.

Sample and methodology

The methodology includes a few differences from other polls, particularly in terms of sampling. We interviewed 1,037 eligible UK voters between 27th March and 5th April, with the objective of avoiding the common pitfall of reaching only the highly politically engaged.

To try to do this, we recruited respondents using two different online sampling techniques. Many were drawn from a carefully selected set of survey panels that we identified as rarely being used for political polling, and which should therefore, in theory, contain less of a bias towards people interested in politics.

This was supplemented by a second method – currently little used in the UK – known as river sampling. Essentially, this reverses the normal process: instead of recruiting respondents to a panel and then interviewing them, it polls people immediately (and, if desired, invites them to a panel afterwards), so it can reach a wider range of people than those who wish to join panels.

River sampling can be done through intercept surveys on partner sites (obviously we avoided sites with news content!) or through newer techniques, such as recruiting respondents from social media. The social media approach worked very well in earlier testing, but in the wild we found much lower response rates and higher refusal rates on key questions. This looks like it might be related to the Cambridge Analytica story, which broke right before the fieldwork started. It will be interesting to revisit this in the future.

Both panel and non-panel methods seem to have their strengths and weaknesses, and these vary across demographic and other lines, but used in tandem they appear complementary.

The poll was conducted UK-wide, including Northern Ireland, but for comparability with other polls and the British Election Study (BES), the figures quoted here – with the exception of those for the EU referendum – are for Great Britain only. And unlike most polls, the population being sampled is eligible voters, rather than all adults. This enables a like-for-like comparison with the BES, but also removes the need to reach those not eligible to vote, something that others have found problematic.

In the event, we had no problem getting hold of ineligible adults – the eligibility rate on the screening question was 92.1 per cent, which is in line with current estimates from Mellon et al. and the Electoral Commission.

Quotas were set for age, gender, education and region. The results begin on the next page.
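For readers curious how quotas of this kind can work during fieldwork, here is a minimal sketch. It is not the system used for this poll: the variables, cell labels and target counts are hypothetical and far smaller than real ones, and it assumes simple marginal quotas (each variable tracked separately) rather than interlocked ones. A respondent is accepted only while every cell they fall into still has room.

```python
from collections import Counter

# Hypothetical marginal quota targets (illustrative numbers only,
# not the targets used in the poll).
TARGETS = {
    "gender": {"female": 2, "male": 2},
    "age": {"18-34": 1, "35-64": 2, "65+": 1},
}


def new_tally(targets):
    """Create an empty count of accepted respondents per quota variable."""
    return {var: Counter() for var in targets}


def try_accept(respondent, tally, targets):
    """Accept the respondent only if all of their quota cells are still open."""
    for var, cells in targets.items():
        cell = respondent[var]
        # Cells with no target are treated as closed.
        if tally[var][cell] >= cells.get(cell, 0):
            return False
    # Every cell had room, so count the respondent against each variable.
    for var in targets:
        tally[var][respondent[var]] += 1
    return True


def open_cells(tally, targets):
    """Return the number of interviews still needed in each unfilled cell."""
    return {
        var: {c: n - tally[var][c] for c, n in cells.items() if tally[var][c] < n}
        for var, cells in targets.items()
    }


if __name__ == "__main__":
    tally = new_tally(TARGETS)
    stream = [
        {"gender": "female", "age": "18-34"},
        {"gender": "male", "age": "18-34"},  # turned away: 18-34 already full
        {"gender": "male", "age": "65+"},
    ]
    for person in stream:
        print(person, "accepted" if try_accept(person, tally, TARGETS) else "screened out")
    print("Still needed:", open_cells(tally, TARGETS))
```

In practice, quota cells that fill slowly (for example older or less engaged respondents) are the ones that determine how long fieldwork takes, which is one reason for combining recruitment methods.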
