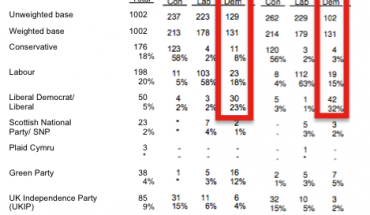

One of the biggest challenges in interpreting opinion polls, for those not used to them, is the concept of house effects: the systematic differences between the polling of different organisations that arise from their methodology. In particular, the mode (phone or online), the approach to political weighting, the treatment of those refusing or saying “don’t know”, and whether likelihood to vote is taken into account all have an impact.

Anthony Wells posted some interesting charts on UK Polling Report last year, showing how these house effects have played out. I thought I’d repeat his analysis, using the same methodology, to see how things look based on the last four months (I could have used six, but that would straddle the European Elections, which I wanted to avoid).

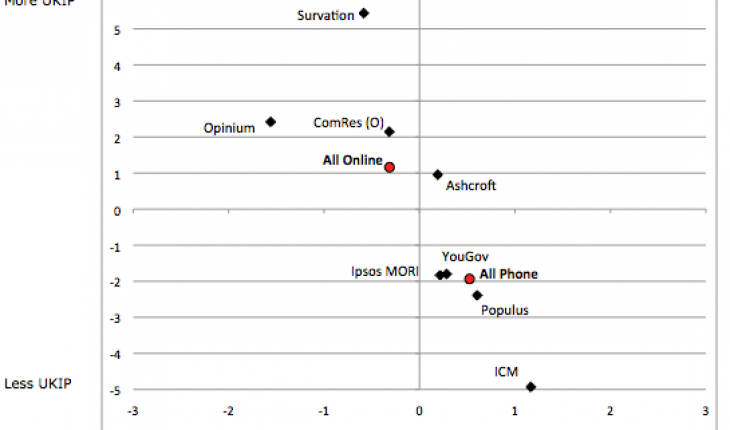

One thing that jumps out immediately is how much less variable the Labour-Conservative spreads (on the horizontal axis) are. It is true that two of the pollsters that tended to show the widest Labour leads didn’t poll in this period, so aren’t included, but 6 out of the 8 that are included fall within ±0.6 points of the average. Opinium shows slightly bigger Labour leads, possibly due to it not using political weighting. ICM looks better for the Tories, which might be down to its ‘spiral of silence’ adjustment.

On the vertical axis, we can see even bigger variability between where pollsters have UKIP than was the case in Anthony’s analysis, although that’s not too surprising given that their vote share is now higher (and 5 or 6 times what it was at the 2010 election, which makes them very tough to poll). Survation (who prompt for UKIP in their online polls) continue to show the highest levels of UKIP support, with ICM showing the lowest.

Averaging all pollsters by mode, we can see that phone pollsters tend to show the spread about a point better for the Conservatives than online pollsters, and UKIP over 3 points lower. But there is considerable variation between them – Lord Ashcroft shows far higher UKIP VI than others do by phone, while online there is an even bigger dispersion. We know why Survation is highest, but why are YouGov and Populus the lowest? It could be that they have more representative panels, but that’s just me speculating…
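For anyone wanting to reproduce the by-mode comparison, here is a minimal sketch of the averaging step in Python. It assumes each pollster's house effect has already been worked out; the pollster labels, the field names and every number below are invented purely for illustration and are not the figures behind the charts.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical house effects, in points relative to the all-pollster average.
# Pollster labels and values are made up for illustration only.
house_effects = [
    {"pollster": "A", "mode": "phone", "lab_con_spread": -1.0, "ukip": -2.0},
    {"pollster": "B", "mode": "phone", "lab_con_spread": 0.0, "ukip": -1.0},
    {"pollster": "C", "mode": "online", "lab_con_spread": 0.5, "ukip": 1.0},
    {"pollster": "D", "mode": "online", "lab_con_spread": 0.5, "ukip": 3.0},
]


def average_by_mode(rows, field):
    """Return the simple mean of `field` for each polling mode."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["mode"]].append(row[field])
    return {mode: mean(values) for mode, values in grouped.items()}


ukip_by_mode = average_by_mode(house_effects, "ukip")
spread_by_mode = average_by_mode(house_effects, "lab_con_spread")
print(ukip_by_mode)
print(spread_by_mode)
```

Note that this gives every pollster equal weight within its mode, regardless of how many polls it published. That is a design choice, and weighting by number of polls would be an equally defensible alternative.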

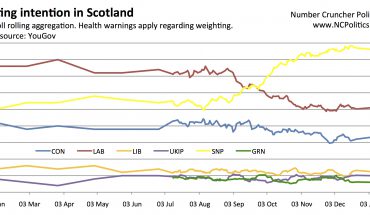

Here’s another view, for all of the parties:

And another showing the same data, but with everything on one axis:

ICM and Survation are also polls apart when it comes to the Lib Dems, but the other way round, and perhaps for the same reasons. Otherwise there isn’t too much variation. With the Greens, Lord Ashcroft tends to show their support a point or so higher than the pack, whereas Populus and Survation show it slightly lower. As with UKIP, the Greens are difficult to poll, because their support is 5 or 6 times higher than it was in 2010.

It is important to remember that these house effects show each pollster relative to the average of all of them. What they can’t show is whether there is a systematic bias affecting all polls. I’ll return to that theme, in some detail, in the near future.
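To make that limitation concrete, here is a rough sketch in Python of a house effect defined as a pollster's own average minus the average across pollsters. This is an assumption about the calculation, not necessarily the exact method used for the charts, and the pollster labels and Labour lead figures are invented for illustration.

```python
from statistics import mean

# Hypothetical Labour leads (in points) from each pollster's polls.
# Labels and values are made up for illustration only.
polls = {
    "Pollster A": [4.0, 6.0],
    "Pollster B": [2.0, 3.0, 4.0],
    "Pollster C": [1.0, 1.0],
}


def house_effects(polls_by_pollster):
    """Each pollster's mean minus the mean of all pollster means."""
    pollster_means = {
        name: mean(values) for name, values in polls_by_pollster.items()
    }
    overall = mean(list(pollster_means.values()))
    return {name: m - overall for name, m in pollster_means.items()}


effects = house_effects(polls)

# Add the same 3-point error to every poll from every pollster.
shifted = {name: [v + 3.0 for v in values] for name, values in polls.items()}
shifted_effects = house_effects(shifted)

print(effects)
print(shifted_effects)
```

Both dictionaries come out identical: adding the same error to every poll moves the overall average by the same amount, so it cancels out of each house effect. A bias shared by all pollsters is therefore invisible to this kind of comparison.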