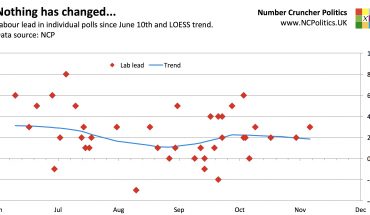

The December Guardian/ICM poll showing the Liberal Democrats taking three points off the Conservatives to record one of their highest poll ratings of 2014 (and to leave the Tories trailing five points behind Labour) certainly got Twitter buzzing. Other polls haven’t shown any major moves in the last few days. Because this was one of the final polls of the year (few are conducted over the festive period) and the last from gold-standard pollster ICM until the new year, many people got rather excited.

But the very same reasons that polling is generally suspended over the festive period could explain this move. ICM typically poll Friday to Sunday, and in 2013 they polled from December 6th to December 8th. This year the dates were 12th to 16th December. My hunch was that the publication delay could have been due to low response rates over what is likely to be one of the busiest shopping weekends of the year (if not the busiest). Since 85% of interviews are by landline, this matters. I asked both ICM and the Guardian whether this was the case and will update this post as and when I get clarification. (Update 19:14 – ICM have said that the delay was due to the length of the survey; they didn’t mention poor response rates. However, the above is still relevant to my other points, all of which still stand.)

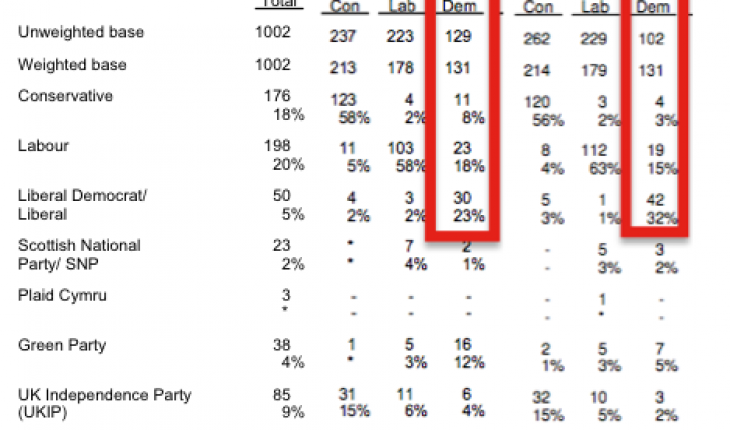

But regardless of whether poor response rates were the cause of the delay, a look at the tables shows something interesting. Like most phone pollsters, ICM weight by recalled 2010 vote, with adjustments. Their sample of around 1,000 voters needs to contain 131 who voted Lib Dem in 2010. In November, their raw poll contained 129 such voters, so the weighting adjustment needed to achieve a representative sample was extremely small. In December, however, the raw sample contained only 102 such respondents, requiring a rather hefty 28% weighting-up.
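To put numbers on that, here is a quick sketch of the arithmetic in Python. The function name is my own, and it assumes the adjustment is a simple ratio of target count to raw count; ICM's actual weighting involves further adjustments, so treat this as an illustration only.

```python
def weighting_uplift(target_count, raw_count):
    """Percentage by which a cell must be weighted up (negative means down)
    so that raw_count respondents count as target_count."""
    return (target_count / raw_count - 1) * 100


# Target number of 2010 Lib Dem voters, as quoted in this post.
TARGET_2010_LIB_DEMS = 131

november = weighting_uplift(TARGET_2010_LIB_DEMS, 129)  # raw count in November
december = weighting_uplift(TARGET_2010_LIB_DEMS, 102)  # raw count in December

print(f"November uplift: {november:.1f}%")
print(f"December uplift: {december:.1f}%")
```

On these figures the November adjustment is under 2%, while the December one comes out at roughly 28%.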

There are two consequences of this. Firstly, large weighting adjustments of this kind magnify the sampling error in that portion of the sample, which we need to take into account when considering the statistical significance of the results as a whole.
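As a rough illustration of the first point, the sketch below computes the textbook 95% margin of error for a share measured among 102 respondents, compared with the 131 they are weighted up to represent. It assumes simple random sampling and a worst-case share of 50%, neither of which reflects ICM's actual methodology, and the function name is my own.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error for a proportion p estimated from
    n respondents, assuming simple random sampling (an assumption here)."""
    return z * math.sqrt(p * (1 - p) / n)


actual = margin_of_error(102)   # respondents actually interviewed in December
nominal = margin_of_error(131)  # the size the cell is weighted up to

print(f"Based on 102 interviews: +/- {actual * 100:.1f} points")
print(f"If there really were 131: +/- {nominal * 100:.1f} points")
```

Weighting 102 respondents up to count as 131 adds no information: the uncertainty within that group remains that of 102 interviews, even though the group now carries the influence of 131 in the headline figures.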

Secondly, and potentially much more significantly, there is the question of why the unweighted sample was so unrepresentative. If a lot of people were indeed out shopping, there is a significant risk that those who were home to answer their landlines were unrepresentative of the 2010 Lib Dem cell as a whole. Within that cell, the split (by current voting intention, with DK/refusals left in) of 3% Tory, 18% Labour and 32% Lib Dem is starkly different from November’s 8%/15%/23%, which was very much in line with what other pollsters have been showing. Once you adjust for the “Don’t knows”, this explains the bulk of the swing.

The other possibility I considered as an explanation for such a concentrated swing was that last weekend was close to the end of term for universities. Students are a well-known example of a 2010 Lib Dem-leaning group that has since turned elsewhere, and their travels home (or other end-of-term activities) might have had an impact, but I don’t see anything in the demographics to support that.

To be clear, I am not suggesting any error on ICM’s part. Rather, if my hunch is correct, the possibility that this might have happened – even to ICM – highlights the difficulties of polling in such a complex political environment, especially at this time of year. And statistically, around one poll in every 20 can be expected to be a rogue, even with a perfectly unbiased sample.

The next ICM poll is due in January.