Note: The inquiry’s final report has now been published.

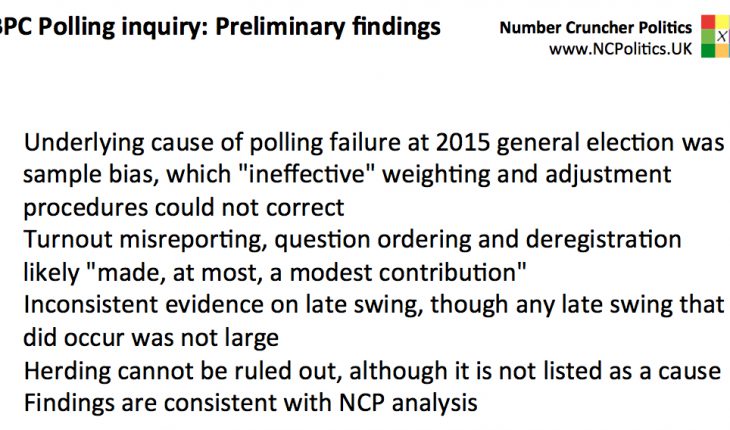

Today the British Polling Council’s inquiry into the polling failure at last year’s general election presents the following preliminary conclusions:

- The underlying culprit turns out to be unrepresentative samples. This isn’t a surprise, as there was a reasonable consensus around this (see this NCP analysis, and also the BES, BSA, YouGov, ICM and others). To quote:

> Following in-depth investigations, the Inquiry panel has concluded that the primary cause of the failure of the 2015 pre-election opinion polls was unrepresentativeness in the composition of the poll samples. The methods of sample recruitment used by the polling organisations resulted in systematic over-representation of Labour voters and under-representation of Conservative voters. Statistical adjustment procedures applied by polling organisations were not effective in mitigating these errors.

- That last line is significant. We’ll get more clarity at the briefing, but that sounds to me like it’s not just the sampling, but the combination of that with ineffective weighting and adjustments. Thus the post-1992 techniques, which would normally have saved the pollsters, were thrown off by the complex voter flows between 2010 and 2015.

- This was always likely to be a risk, as I argued in my piece the day before the election. As far as I can see, this aspect hasn’t really had a lot of attention, but it does explain why polls haven’t been massively wrong more often (and were right in Scotland).

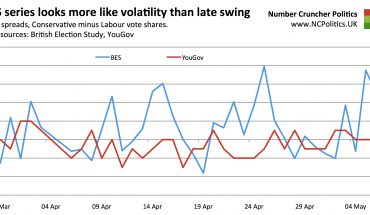

- The “other” factors ruled out as significant (or altogether) include a few things that no-one really took seriously (eg de/unregistered voters, just as with the poll tax in 1992). Also, late swing (see 1970) was ruled out early on as a major cause.

- But they also include things like differential turnout, which some pollsters were putting quite a lot of weight on (though that doesn’t necessarily mean they’re now overstating the Tory lead).

- The release doesn’t mention the classic shy Tories directly. Pollsters have been somewhat divided on this, although no-one thinks this is a major factor. More like busy Tories (and loud Labour).

- So the pollsters need more representative samples, by getting better raw samples (if possible) or by better weighting (if necessary). Some work I’ve been doing on this, which some pollsters are looking at using, fixes a lot of the problem.

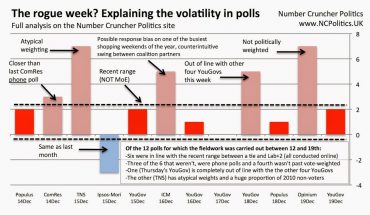
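
To make the weighting idea concrete, here is a minimal sketch of post-stratification, the textbook way of reweighting a sample so that its group shares match known population shares. It is an illustration only: it is not the inquiry’s method or any pollster’s actual procedure, and the groups (`engaged`, `disengaged`), the 50/50 population split and the ten respondents are all invented for the example.

```python
from collections import Counter

def poststratify(groups, population_shares):
    """Return one weight per respondent so weighted group shares match the targets."""
    counts = Counter(groups)
    n = len(groups)
    # Weight = target share of the group / share of the group in the sample.
    return [population_shares[g] / (counts[g] / n) for g in groups]

def weighted_share(votes, weights, party):
    """Weighted proportion of respondents who chose `party`."""
    total = sum(weights)
    return sum(w for v, w in zip(votes, weights) if v == party) / total

# Hypothetical sample: politically engaged people are over-represented (8 of 10).
groups = ["engaged"] * 8 + ["disengaged"] * 2
votes = ["Lab"] * 5 + ["Con"] * 3 + ["Con"] * 2

# Assumed population targets, made up for illustration.
population_shares = {"engaged": 0.5, "disengaged": 0.5}

weights = poststratify(groups, population_shares)
raw_con = weighted_share(votes, [1.0] * len(votes), "Con")
adjusted_con = weighted_share(votes, weights, "Con")

print(round(raw_con, 4))       # 0.5
print(round(adjusted_con, 4))  # 0.6875
```

The correction only works here because the invented weighting variable is related to both who ends up in the sample and how they vote. Weighting on variables that do not capture the skew leaves the error in place, which is consistent with the release’s line about adjustment procedures not being effective.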

- The press release contains an interesting reference to herding. I argued in June that it couldn’t be ruled out, but that there was no evidence that it actually caused the inaccuracies. The release isn’t clear on that, so we’ll see if the inquiry has found any.

- For now I’m undecided about which EU referendum polls are right, although I’m looking very closely at it…

I’ll be at the meeting tomorrow to provide updates via Twitter. In the meantime, see other NCP articles on polling accuracy, or enjoy my film for BBC Newsnight…