The nuts and bolts

But what, in terms of what pollsters did or could have done, was the root of the problem? The answer is very likely to have been a sample imbalance, coupled with problems in the way pollsters were weighting the data, as was suggested by the BES team and others.

Raw polling data is almost never perfectly representative – it usually has too few or too many of a particular age, gender, geography, social class, political persuasion and so on. As long as any bias in the data correlates with these types of measurable, observable variables, weighting the data to match them does a perfectly adequate job.
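To make the idea of weighting concrete, here is a minimal sketch of weighting on a single variable. The age groups, sample sizes, population shares and vote intentions are all hypothetical, invented for illustration, and real pollsters weight on several variables at once, often with more elaborate methods.

```python
from collections import Counter

def poststratify(sample_groups, population_shares):
    """Return one weight per respondent: population share / sample share of their group."""
    n = len(sample_groups)
    counts = Counter(sample_groups)
    return [population_shares[g] / (counts[g] / n) for g in sample_groups]

# Hypothetical raw sample of 100: too many over-55s relative to the population.
groups = ["under55"] * 40 + ["over55"] * 60
# Hypothetical vote intention: 1 = supports party A, 0 = does not.
# Under-55s: 10 of 40 support A. Over-55s: 36 of 60 support A.
votes = [1] * 10 + [0] * 30 + [1] * 36 + [0] * 24
# Hypothetical true population shares for the same groups.
population = {"under55": 0.6, "over55": 0.4}

weights = poststratify(groups, population)
raw = sum(votes) / len(votes)
weighted = sum(w * v for w, v in zip(weights, votes)) / sum(weights)

print(f"raw support: {raw:.2f}")            # 0.46
print(f"weighted support: {weighted:.2f}")  # 0.39
```

The correction works here only because the skew runs entirely along the variable being weighted. If the over-55s who answered differed from the over-55s who did not, the weights would leave that bias untouched.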

But some biases don’t match normal weighting variables. In the case of a severe partisan response bias, as often happens after set-piece events like party conferences, the determining factor is simply political enthusiasm.

It’s also worth noting that phone polls are now arguably as self-selecting as online polls, due to low (and falling) response rates. Indeed, the difference in accuracy between telephone and online pollsters in May was negligible. The skewed demographic profile of landline usage can be adjusted for, but having very low levels of contact with certain groups creates further problems.

As widely noted, opinion poll respondents tend to be more politically interested than voters as a whole. This is a problem in and of itself, but additionally, the bias arises unevenly within opinion poll respondents. For example, Great Britain turnout at last year’s European Parliament elections was 34%, compared to the 70% who told YouGov on behalf of the BES that they voted. In the general election, turnout was 66%, but 91% of the panel said they voted. In both cases, claimed turnout is massively higher than actual turnout.

But the difference between claimed turnout levels is much smaller than the difference between actual turnout levels. That implies that people who voted in the general election but not the European election were scarcer in samples than in reality, even as a proportion of the population (25% of samples versus 31% in reality). As a proportion of just general election voters, they were even more severely undersampled.
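The arithmetic behind that last point can be sketched using only the rounded figures quoted above. One assumption is mine: that the 25% and 31% are shares of the whole sample and the whole electorate respectively, so that dividing by claimed and actual general election turnout re-bases them on general election voters. Because the inputs are rounded, the simple turnout gaps will not line up exactly with the 25% and 31% figures, which presumably come from respondent-level data.

```python
# Rounded percentages quoted in the text.
claimed = {"european": 70, "general": 91}  # self-reported turnout in the panel
actual = {"european": 34, "general": 66}   # official turnout

# Gap between the two elections, in percentage points.
claimed_gap = claimed["general"] - claimed["european"]
actual_gap = actual["general"] - actual["european"]

# General-election-only voters as a share of everyone, as quoted in the text.
ge_only_sample, ge_only_actual = 25, 31

# Re-based on general election voters alone (assumed denominators: 91 and 66).
share_sample = ge_only_sample / claimed["general"]
share_actual = ge_only_actual / actual["general"]

# Sample share divided by real share: below 1 means undersampled.
ratio_all = ge_only_sample / ge_only_actual
ratio_ge_voters = share_sample / share_actual

print(f"turnout gap: claimed {claimed_gap} points, actual {actual_gap} points")  # 21, 32
print(f"share of general election voters: sample {share_sample:.1%}, actual {share_actual:.1%}")  # 27.5%, 47.0%
print(f"representation ratio: everyone {ratio_all:.2f}, general election voters {ratio_ge_voters:.2f}")  # 0.81, 0.58
```

On these figures the group is present at roughly four-fifths of its true rate among the whole population, but at under three-fifths of its true rate among general election voters.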

These people matter – they are less politically engaged than people who vote in second-order elections, but they still vote, and in 2010 and 2015 they voted Conservative.

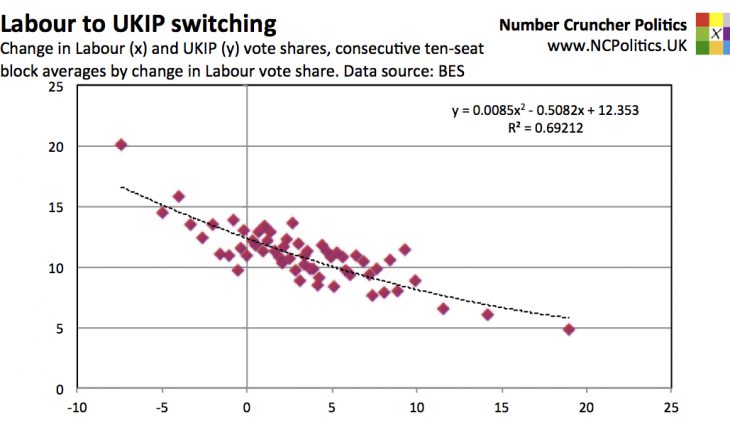

By contrast, on this measure those that had voted for one of the coalition parties in 2010 but subsequently changed their vote to Labour or UKIP were among those overrepresented in the polls before the election. Given the volume of data, it may yet transpire that there is some magic variable, suitable for weighting, that distinguishes these “switchers” from others that voted the same way at the last election. But at the moment, the only obvious difference is that they changed their vote.

What about the shy Tories? In my pre-election piece, I lazily (and in hindsight, regrettably) used the term in the title to describe the statistical pattern of the Conservatives consistently beating the polls. In my defence I did make it clear in the piece what I meant:

> …the so-called shy Tory factor is really a statistical pattern, for which shyness or dishonesty are merely possible explanations. As will be discussed, there is evidence for the various theories as to its causes, but we don’t know, and may not know, how big their impacts are (or were) individually. We just know that polls have shown evidence of bias. Thus the terms “shy Tory factor” and “shy Tory effect” are used in a far broader sense than their literal meanings.

Shy Tories in the stricter sense – people being dishonest or cognitively dissonant when participating in polls – now seem to have been a smaller factor than some pollsters had initially thought. But it doesn’t seem likely that the shy Tories have really gone away – they may just have evolved into the “less likely to take opinion polls but more likely actually to vote” Tories.

Differential turnout (the so-called “lazy Labour” problem) was a contributory factor, but not a particularly big one.
